AI Is Already Embedded in Your Enterprise Tools, Often Without Explicit Control

AI capabilities are increasingly embedded within enterprise platforms such as Copilot, ServiceNow, Zoom, and other SaaS applications.

In many cases:

- AI features are enabled by default or introduced through updates

- Data flows through prompts, logs, and integrations without clear visibility

- Organisations do not fully understand where their data is processed or retained

This creates a critical concern:

Enterprise data is interacting with AI systems without clear ownership of risk and control.

AI Risk is Distributed — Accountability is Not

Enterprise AI systems operate across multiple layers — model providers, platforms, integrations, data pipelines, and enterprise environments.

Risk does not sit in one place.

Responsibility is distributed.

However:

Accountability for outcomes remains with the enterprise.

This creates a structural gap:

- Vendors control parts of the system

- Enterprises remain accountable for outcomes

Bridging this gap requires:

- Clear shared responsibility mapping

- Enforceable contractual clauses aligned to that responsibility

Why Traditional Contracts and Cloud Models Are Insufficient

Cloud shared responsibility models focus on infrastructure.

AI systems introduce additional complexity:

- Dynamic and evolving behaviour

- Prompt-driven execution

- Data dependency across lifecycle stages

- Autonomous or semi-autonomous actions

As a result:

- Responsibility is not binary (vendor vs customer)

- Most controls are shared

- Contracts must reflect operational control ownership, not generic liability

Silent AI Adoption in SaaS Environments

AI is increasingly embedded across enterprise tools.

Usage is often:

- Implicit

- User-driven

- Not centrally governed

Typical concerns include:

- Data exposure through prompts and outputs

- Lack of visibility into processing and storage

- No clear audit trail of interactions

- Uncertainty around vendor data usage

This is not a vendor problem alone — it is a shared responsibility issue.

AI Responsibility Layers and Contractual Implications

AI systems span multiple layers, each with distinct risks and contractual priorities:

| Layer | Examples | Contractual Focus |
|---|---|---|
| AI SaaS / Applications | Copilot, ChatGPT Enterprise | Data usage, access control, logging |
| Foundation Models / APIs | LLM providers | Model transparency, updates |
| MLOps Platforms | Hosting, pipelines | Monitoring, change management |
| Integrators | Agents, workflows | Orchestration controls |
| Data Layer | RAG, enterprise data | Data governance, lineage |
| Runtime / Edge | User interactions | Usage control, auditability |
| Self-hosted | Internal models | Full control ownership |

Control Ownership is Predominantly Shared — Contracts Must Reflect This

Most AI controls are neither vendor-owned nor enterprise-owned alone.

They are shared controls, requiring explicit contractual definition.

Illustrative Mapping

| Control Area | Supplier | Customer | Contractual Requirement |
|---|---|---|---|
| Model Security | ✔ | ✔ | Define shared responsibility and limitations |
| Data Governance | ✖ | ✔ | Restrict data usage and training rights |
| Identity & Access | ✔ | ✔ | Define configuration responsibility |
| Monitoring & Logging | ✔ | ✔ | Ensure log availability and retention |
| Model Validation | ✔ | ✔ | Require validation evidence |
| Fairness & Bias | ✔ | ✔ | Define testing and accountability |
| Incident Response | ✔ | ✔ | Define timelines and obligations |
| Transparency | ✔ | ✔ | Require disclosures and documentation |
| Risk Acceptance | ✖ | ✔ | Retained by enterprise |
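One way to make the mapping above operational is to hold it as data and check a contract against it. The sketch below is illustrative only: the `Control` type, the `CONTROLS` list, and the idea of passing in a set of control areas that a contract already covers are assumptions made for this example, not part of any standard or vendor tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Control:
    area: str
    supplier: bool     # supplier holds part of the responsibility
    customer: bool     # customer (enterprise) holds part of the responsibility
    requirement: str   # contractual requirement taken from the table


# Rows copied from the illustrative mapping table.
CONTROLS = [
    Control("Model Security", True, True, "Define shared responsibility and limitations"),
    Control("Data Governance", False, True, "Restrict data usage and training rights"),
    Control("Identity & Access", True, True, "Define configuration responsibility"),
    Control("Monitoring & Logging", True, True, "Ensure log availability and retention"),
    Control("Model Validation", True, True, "Require validation evidence"),
    Control("Fairness & Bias", True, True, "Define testing and accountability"),
    Control("Incident Response", True, True, "Define timelines and obligations"),
    Control("Transparency", True, True, "Require disclosures and documentation"),
    Control("Risk Acceptance", False, True, "Retained by enterprise"),
]


def shared_controls(controls):
    """Return the areas where both supplier and customer hold responsibility."""
    return [c.area for c in controls if c.supplier and c.customer]


def uncovered(controls, covered_areas):
    """Return shared control areas that the contract does not yet address.

    covered_areas is a hypothetical input: the set of control areas for
    which a reviewer has found an explicit clause in the contract.
    """
    return [area for area in shared_controls(controls) if area not in covered_areas]
```

For example, `uncovered(CONTROLS, {"Model Security", "Incident Response"})` lists the five remaining shared areas that would still need an explicit clause.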

Key Insight

Most AI risks arise in the integration layer — not in the model.

This includes:

- Prompt orchestration

- RAG pipelines

- Tool integrations

- Workflow automation

These are typically:

- Configured by the enterprise

- Supported by vendors

- Poorly defined in contracts

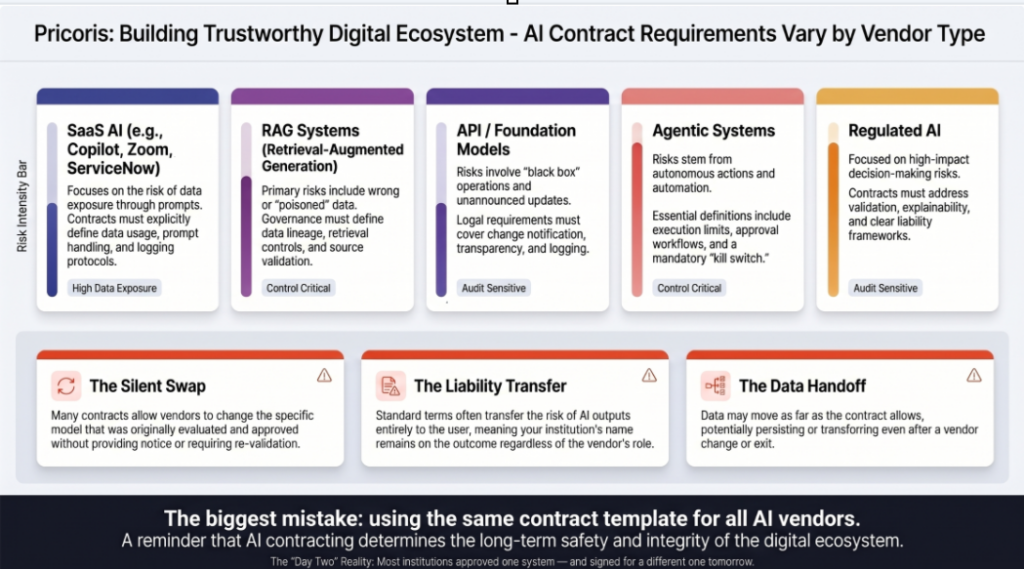

Differentiating Contractual Clauses by AI Vendor Type

Clause priority must be aligned to risk exposure.

1. General-Purpose AI SaaS (Copilot, GenAI platforms)

Risk

- Broad access to enterprise data

- Prompt-driven exposure

- Limited visibility

Critical Clauses

- Data usage restrictions (no training on enterprise data)

- Prompt/output logging

- Access control enforcement

- Disclosure of model limitations

- Incident reporting
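A prompt/output logging clause is easier to negotiate when the enterprise knows what a log entry should contain. The following is a minimal sketch of one possible audit record, assuming the enterprise can intercept interactions (for example through a gateway it operates). The function name and field names are hypothetical. Hashing the text by default is a design assumption that keeps sensitive prompt content out of the log while still allowing later matching.

```python
import hashlib
from datetime import datetime, timezone


def audit_record(user_id, tool, prompt, output, store_text=False):
    """Build one JSON-serialisable audit entry for an AI interaction."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "tool": tool,
        # Digests let an auditor confirm which text was sent without storing it.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "output_chars": len(output),
    }
    if store_text:
        # Only retain raw text where the retention policy allows it.
        record["prompt"] = prompt
        record["output"] = output
    return record
```

Whether raw text may be stored at all is a retention question the contract and internal policy should answer. The `store_text` flag only marks where that decision takes effect.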

2. RAG-Based Systems

Risk

- Data poisoning

- Retrieval errors

- Manipulated outputs

Critical Clauses

- Data lineage and validation

- Retrieval filtering

- Restrictions on ingestion

- Monitoring for anomalies
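Retrieval filtering and ingestion restrictions can be pictured as a check applied to every retrieved chunk before it reaches the model. This sketch assumes each chunk carries a source label (lineage) and a set of groups entitled to read it. The `Chunk` type, the field names, and the allow-list are hypothetical, and real RAG frameworks expose their own filtering mechanisms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str                 # where the chunk was ingested from (lineage)
    allowed_groups: frozenset   # groups entitled to read this chunk


def filter_chunks(chunks, user_groups, approved_sources):
    """Split retrieved chunks into (kept, dropped).

    A chunk is kept only if it comes from an approved source and the
    user belongs to at least one group entitled to read it.
    """
    kept, dropped = [], []
    for chunk in chunks:
        from_approved = chunk.source in approved_sources
        user_entitled = bool(chunk.allowed_groups & set(user_groups))
        if from_approved and user_entitled:
            kept.append(chunk)
        else:
            dropped.append(chunk)
    return kept, dropped
```

Returning the dropped chunks as well makes it possible to count and log them, which is one simple input to anomaly monitoring.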

3. Foundation Model / API Providers

Risk

- Black-box behaviour

- Uncontrolled updates

Critical Clauses

- Model update notifications

- Performance transparency

- Logging support

- Service continuity

4. Agentic and Automation Systems

Risk

- Autonomous actions

- Operational impact

Critical Clauses

- Tool execution limits

- Human oversight requirements

- Kill switch mechanisms

- Action audit logs
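The four clauses for agentic systems correspond to controls that can be expressed in a few lines. The sketch below is a simplified illustration, not a production design: `ToolGuard` and its parameters are hypothetical names, and a real deployment would enforce these checks in the orchestration layer rather than in a single in-memory object.

```python
class ToolGuard:
    """Gate every tool call an agent wants to make."""

    def __init__(self, allowed_tools, max_calls, needs_approval=()):
        self.allowed_tools = set(allowed_tools)    # tool execution limits
        self.max_calls = max_calls                 # cap on allowed calls
        self.needs_approval = set(needs_approval)  # human oversight required
        self.killed = False                        # kill switch state
        self.calls = 0
        self.audit_log = []                        # action audit log

    def kill(self):
        """Activate the kill switch: every later call is denied."""
        self.killed = True

    def authorize(self, tool, approved_by=None):
        """Return True if the call may proceed, and record the decision."""
        if self.killed:
            decision = "denied: kill switch active"
        elif tool not in self.allowed_tools:
            decision = "denied: tool not allowed"
        elif self.calls >= self.max_calls:
            decision = "denied: call limit reached"
        elif tool in self.needs_approval and approved_by is None:
            decision = "denied: human approval required"
        else:
            decision = "allowed"
            self.calls += 1
        self.audit_log.append(
            {"tool": tool, "approved_by": approved_by, "decision": decision}
        )
        return decision == "allowed"
```

Denied calls are logged as well as allowed ones, so the audit trail shows what the agent attempted and not only what it did.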

5. Regulated / High-Impact AI

Risk

- Decision impact

- Compliance exposure

Critical Clauses

- Validation obligations

- Explainability requirements

- Liability allocation

- Regulatory alignment

6. Integrated / Custom AI Systems

Risk

- Orchestration failures

- Control gaps

Critical Clauses

- Control ownership clarity

- Integration-level accountability

- End-to-end traceability

- Testing obligations
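The six vendor types and their critical clauses can be kept as a simple checklist and compared with what a contract actually contains. In the sketch below the clause names are taken from the lists above. The dictionary keys and the `missing_clauses` helper are hypothetical, and deciding whether a contract really covers a clause remains a legal review task that the code does not perform.

```python
CRITICAL_CLAUSES = {
    "general_purpose_saas": {
        "data usage restrictions", "prompt/output logging",
        "access control enforcement", "disclosure of model limitations",
        "incident reporting",
    },
    "rag": {
        "data lineage and validation", "retrieval filtering",
        "restrictions on ingestion", "monitoring for anomalies",
    },
    "foundation_model_api": {
        "model update notifications", "performance transparency",
        "logging support", "service continuity",
    },
    "agentic": {
        "tool execution limits", "human oversight requirements",
        "kill switch mechanisms", "action audit logs",
    },
    "regulated_high_impact": {
        "validation obligations", "explainability requirements",
        "liability allocation", "regulatory alignment",
    },
    "integrated_custom": {
        "control ownership clarity", "integration-level accountability",
        "end-to-end traceability", "testing obligations",
    },
}


def missing_clauses(vendor_types, contract_clauses):
    """Return the critical clauses not yet present, sorted alphabetically.

    vendor_types: the categories that apply to one vendor (it may fit several).
    contract_clauses: clause names a reviewer has confirmed in the contract.
    """
    required = set().union(*(CRITICAL_CLAUSES[t] for t in vendor_types))
    return sorted(required - set(contract_clauses))
```

A vendor that offers an agent built on a RAG pipeline would be checked with `missing_clauses(["agentic", "rag"], confirmed_clauses)`, since a single product often falls into more than one category.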

Core Contractual Areas Across All AI Systems

Data Usage and Protection

- Restrict use for model training

- Define prompt/output handling

- Address retention and cross-border flows

Transparency and Explainability

- Disclosure of model limitations

- Access to logs and documentation

Monitoring and Incident Management

- Logging requirements

- Incident reporting timelines

- AI misuse and failure handling

Model Updates and Change Management

- Notification of changes

- Impact assessment

- Rollback mechanisms
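A change notification clause can be backed by a check on the enterprise side that compares what was approved with what is observed in use. This is a minimal sketch that assumes the vendor exposes some model identifier or version in its responses or documentation, which not every vendor does. The field names and the `next_step` outcomes are hypothetical.

```python
def detect_model_change(approved, observed):
    """Return {field: (approved_value, observed_value)} for each differing field."""
    fields = sorted(set(approved) | set(observed))
    return {
        f: (approved.get(f), observed.get(f))
        for f in fields
        if approved.get(f) != observed.get(f)
    }


def next_step(changes, vendor_notified):
    """Suggest a follow-up action for a detected change."""
    if not changes:
        return "none"
    if vendor_notified:
        return "impact assessment"
    # A change with no prior notice may also breach the notification clause.
    return "impact assessment and contract escalation"
```

A rollback step is left out on purpose, because what rollback means depends on what the vendor actually offers.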

Audit and Assurance

- Access to evidence

- Validation support

- Audit rights (where feasible)

Liability and Risk Allocation

- Define responsibility for outcomes

- Avoid assigning all risk to a party that does not control the relevant safeguards

From Shared Responsibility to Enforceable Contracts

A shared responsibility model is only effective when:

- Translated into contractual clauses

- Mapped to operational controls

- Supported by monitoring and testing

Without this:

- Responsibility remains theoretical

- Contracts do not reduce risk

Alignment with ISO/IEC 42001

This integrated approach supports Annex A control area A.10 (Third-party and customer relationships), which expects organisations to:

- Define responsibility for externally provided AI

- Establish contractual controls

- Ensure monitoring and validation of vendor AI systems

It also supports:

- Vendor risk classification

- Allocation of control ownership

- Audit traceability and evidence

From Responsibility to Accountability

Organisations must ensure:

- Controls are implemented

- Controls are tested

- Evidence is generated

- Decisions are traceable

Without this:

- Governance frameworks fail in practice

- Audit readiness is not achieved
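Generating evidence and keeping decisions traceable is easier to audit when the evidence log is tamper-evident. One common technique, shown here as a sketch and not as a prescribed method, is a hash chain in which each entry's hash depends on the previous entry. The function names and the entry fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def _digest(prev_hash, entry):
    """Hash the previous link's hash together with this entry's content."""
    payload = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_evidence(chain, entry):
    """Append an evidence entry, linking it to the previous one."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"entry": entry, "prev_hash": prev_hash, "hash": _digest(prev_hash, entry)}
    )
    return chain


def verify_chain(chain):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for link in chain:
        if link["prev_hash"] != prev_hash:
            return False
        if link["hash"] != _digest(prev_hash, link["entry"]):
            return False
        prev_hash = link["hash"]
    return True
```

An entry might record a control test, for example `{"control": "Monitoring & Logging", "result": "pass"}`. Editing any stored entry afterwards causes `verify_chain` to return `False`.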

Business Outcomes

- Clear allocation of AI risk and responsibility

- Stronger vendor governance

- Reduced reliance on vendor assumptions

- Improved audit and regulatory readiness

Conclusion

AI governance cannot rely on:

- Vendor assurances alone

- Generic contractual clauses

- Traditional shared responsibility models

It requires an integrated approach where:

- Responsibility is clearly defined

- Contracts reflect actual control ownership

- Controls are validated and evidenced

The risk is not that AI is being used — the risk is that it is being used without visibility, ownership, and validation.

Assess Your AI Vendor Contracts and Responsibility Model

Ensure your agreements and governance frameworks align with how AI systems actually operate.